On paper, a scripted module can check every box. The scenario holds up, the dialogue feels right, the experience seems engaging. Sometimes even genuinely successful.

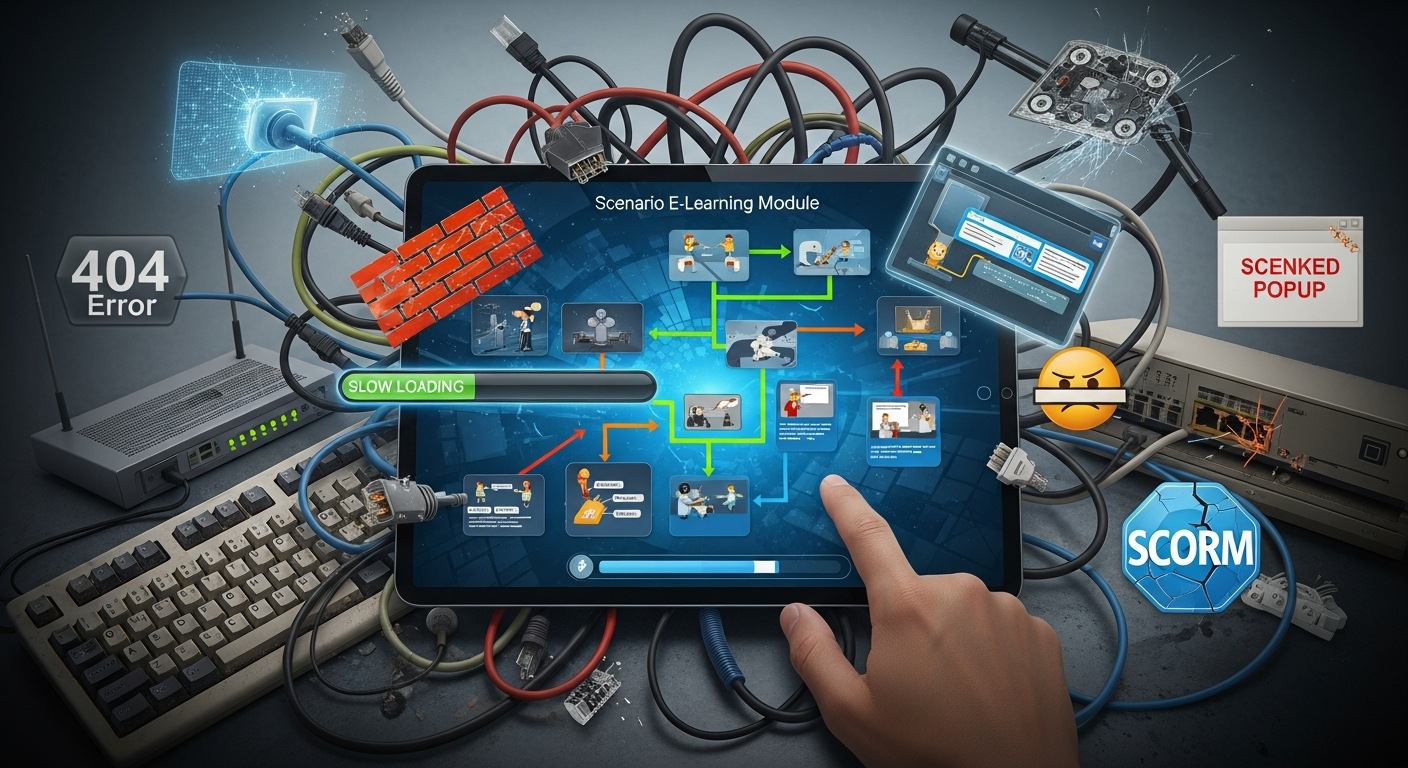

Then comes the moment of deploying the scripted e-learning module. That’s often where things get complicated: not at the level of the idea, but at the level of reality. The field, the usage patterns, the machines, the LMS, network constraints, learners’ habits, manager involvement (or not): everything that isn’t always visible during design.

That’s also where the difference plays out between a module that produces something tangible and a module that promises a lot but leaves a fuzzy impression. A score that doesn’t get reported correctly. A session resume that goes sideways. A scene that gets misunderstood. Audio that doesn’t trigger. A browser that’s too locked down. A launch communication that never happened. Taken separately, these are “small” problems. Together, they’re enough to break the effect.

Before we even talk about publishing, there are three locks to put in place:

- what we want people to learn, concretely;

- the real-world conditions in which the module will run;

- the way we’re going to introduce it, support it, and accompany it.

When those three foundations are shaky, the rest rarely follows.

Scripted e-learning deployment: it’s not just putting a SCORM file online

We’re talking here about simulations, serious games, role plays, branching pathways. Modules where the learner doesn’t just click “next”: they choose, they test, they make mistakes, they try again, they influence—depending on the setup—what happens next.

In this kind of format, publishing a package is only the beginning. What you really need to verify is something else: does the experience hold up once it’s out of the workshop? Does it remain readable, stable, trackable? Does it work without surprises on the target LMS? And if we need to evolve it in six months, will everything break at the slightest change?

That’s what makes these modules more sensitive than a classic linear course. In a standard module, the path is closed, controlled end to end. Here, it isn’t. A branching point that seems trivial quickly creates multiple realities to manage: more tests, more rules, more maintenance, more variables, more risk of inconsistency.

Add to that a point that’s often minimized: in these setups, we frequently assess fine-grained skills. Posture. Communication. Discernment. Customer relationships. Management. So if tracking misrepresents what happened, or the interface blurs the instruction, you end up attributing to the learner a weakness that may actually come from the module itself.

This is a common case, including with well-scripted modules designed with tools like VTS Editor: the writing is solid, sometimes excellent, but proof of impact is decided elsewhere. At launch and go-live.

What we really expect at the moment of a scripted e-learning launch

In general, expectations are very concrete. We want to avoid visible hiccups on day one. We want the LMS to return data that makes sense. We want to distinguish between “the learner finished” and “the learner demonstrated something satisfactory.” We also want to be able to defend the program in front of HR, the business, management, sometimes compliance. And if possible, we want to stop starting from scratch with every new project.

The same questions come up, over and over:

- how to prevent learners from getting stuck in the first few minutes;

- how to get LMS statuses that actually mean something;

- how to define success in a non-linear module;

- how to prove we’re measuring more than a completion rate;

- how to turn a good launch into a reusable method.

The ten traps that follow stem directly from that.

Objectives, gamification, non-linearity: 4 pitfalls that weaken scripted e-learning deployment

Launching without an observable objective

An immersive module can be appealing and still prove nothing useful.

If the objective stays vague, it’s almost impossible to demonstrate a real effect. “Raising awareness of customer relationships,” for example, is an intention. Not a measurable objective.

When you formulate something like: “identify the customer’s emotion, rephrase before proposing a solution, without promising an unrealistic timeline,” then you have something. You can observe behavior. So you can assess it. So you can track it. So you can talk about impact with a minimum level of rigor.

Before going into production, it’s better to explicitly frame:

- the target behavior;

- the exact moment it is put to the test in the scenario;

- the chosen indicator;

- the expected data in the LMS.

Simple example: in an interview simulation, active listening is tested on three key decisions. Two appropriate answers out of three: the module switches to “Passed.” Below that: remediation, then a new attempt according to the same rule. Nothing sophisticated. But it’s clear, defensible, usable.
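Written down, the rule from this example fits in a few lines. Here is a minimal sketch in Python, assuming the three key decisions are recorded as booleans; the function name, the `required` parameter, and the `"remediation"` label are illustrative and do not come from any authoring tool.

```python
from typing import Sequence


def listening_status(decisions: Sequence[bool], required: int = 2) -> str:
    """Return "passed" when enough key decisions were appropriate.

    `decisions` holds one boolean per key decision (True = appropriate).
    `required` is the threshold from the example: two out of three.
    """
    appropriate = sum(1 for d in decisions if d)
    return "passed" if appropriate >= required else "remediation"


print(listening_status([True, False, True]))   # passed
print(listening_status([False, False, True]))  # remediation
```

The value of writing it this way is that the threshold becomes an explicit, reviewable parameter rather than something buried in the scenario.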

Rewarding the game mechanic instead of reinforcing the skill

Gamification isn’t the problem. The issue is decorative gamification. The kind that adds a score, badges, or speed with no solid link to learning.

As soon as the setup mainly rewards speed, efficient clicking, or point accumulation, it starts training something other than what it claims to develop. Learners notice quickly. They game the system. They aim for the score. Not necessarily the right move.

A playful mechanic has a pedagogical value if, and only if, it helps do three things better:

- clarify what’s expected;

- make consequences visible;

- support progression.

Take a conflict-management module. If the learner abruptly cuts the other person off, the scene should make them feel it right away: stiffness, shutdown, rising tension, loss of cooperation. Just removing a few points isn’t enough. It’s too abstract. Situational feedback, embedded in the scene, teaches much more.

In VTS Editor, that often comes through competency-based scores, contextualized feedback, sometimes badges—but only when they mark a real learning milestone, not just a surface effect.

Multiplying branches until the module becomes unmanageable

Non-linearity is impressive. It sometimes reassures certain stakeholders too. The more branches there are, the more the module “feels rich,” “feels advanced.”

Except that, in real life, what looks like a good idea can quickly turn out to be a bad one.

Each additional branch adds debt: more cases to test, more combinations, more maintenance, more gray areas, more chances for an inconsistent state to show up somewhere. And often, for a fairly modest learning gain.

So the right question isn’t: how many branches can we create? The right question is: what non-linearity actually brings something to learning?

Very often, a few structuring decisions are enough. The rest can be handled through more restrained micro-variations: a different tone, a brief consequence, a character reaction, feedback targeted at a specific skill.

Two habits make everything far more robust:

- adopt clear naming conventions for variables, states, exits, and competencies;

- plan a fallback path when an unexpected state occurs.

Because a learner stuck in a scene that can’t be continued is the kind of detail that instantly ruins the experience. A simple exit, even imperfect, is better than a wall.
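Both habits can be illustrated together. The sketch below is in Python and is not tied to any authoring tool: the scene names, choice names, and the `safe_exit` scene are all hypothetical. It shows a consistently named transition table and a lookup that falls back to an exit scene whenever the (scene, choice) pair was never planned.

```python
from typing import Dict, Tuple

# Hypothetical scene graph: (current scene, learner choice) -> next scene.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("intro", "greet"): "needs_analysis",
    ("intro", "skip"): "objection",
    ("needs_analysis", "rephrase"): "proposal",
    ("objection", "listen"): "proposal",
}

FALLBACK_SCENE = "safe_exit"  # assumed name for the "simple exit" scene


def next_scene(current: str, choice: str) -> str:
    """Return the next scene, or the fallback when the state is unexpected."""
    return TRANSITIONS.get((current, choice), FALLBACK_SCENE)


print(next_scene("intro", "greet"))       # needs_analysis
print(next_scene("proposal", "unknown"))  # safe_exit
```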

Confusing a learning problem with a UX problem

Immersion doesn’t remove the need to guide.

In a scripted module, the learner shouldn’t spend long seconds wondering what you expect from them, where to click, or why their answer is considered appropriate or not. When that happens, you’re often looking at a UX problem, not a gap in the skill itself.

It’s a classic drift: you attribute to the skill what actually comes from the interface, instructions, pacing, or level of help.

If 60% of users stumble in the same place, it’s better to start by checking whether the task is understood.

Example: an exploration scene asks the learner to identify two weak signals in an office. If the mission is half-stated, some will click randomly, others won’t dare to do anything, and others will think they need to open everything. The right reflex is to state the objective clearly, then surface a progressive hint if nothing happens. You preserve autonomy without creating unnecessary frustration.
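A progressive hint can be as simple as a list of escalating messages tied to idle time. The Python sketch below only illustrates that logic: the delays (20, 40, and 60 seconds) and the hint texts are assumptions made up for the office example, to be tuned during the pilot.

```python
from typing import Optional

# Assumed escalation steps: (seconds of inactivity, hint text).
HINTS = [
    (20, "Look around the office: two details deserve your attention."),
    (40, "One of the signals is on the desk."),
    (60, "Click the pile of unopened mail on the desk."),
]


def hint_for(idle_seconds: float) -> Optional[str]:
    """Return the strongest hint unlocked by the idle time, or None."""
    unlocked = [text for delay, text in HINTS if idle_seconds >= delay]
    return unlocked[-1] if unlocked else None
```

With these values, a learner who acts within 20 seconds never sees a hint, which is what preserves autonomy.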

Pacing also plays a huge role. A short piece of info, an action, some feedback, then a breath. If there’s only exposition, or if the action comes too late, attention drops. And dropout rises, quietly but surely.

To frame UX and cognitive load, the recommendations of the Nielsen Norman Group remain a solid foundation.

Testing, SCORM, media: 3 key steps for reliable scripted e-learning deployment

Testing on your own machine and believing the work is done

This one is expensive. Often more expensive than you imagine.

On the designer’s workstation, everything works. The module launches, media plays, buttons respond, the score reports. Perfect.

Then, once published, problems show up: proxy, VPN, locked-down browser, aging machines, autoplay policy, slow network in a branch office, no headset, audio blocked, inconsistent performance. And then, surprise (or not).

The design workstation is not a reference environment. It’s a comfortable environment.

Even a fairly light test plan should cover, at minimum:

- module launch and loading;

- sensitive interactions: choices, clicks, timers, resume;

- audio and video;

- end of the course and the associated statuses;

- resume after interruption.

And above all, these tests need to be done in the target LMS. Not only with a local SCORM player. A local player can reassure you; it does not validate deployment.

SCORM and LMS settings done too late, or “close enough”

In this type of project, the module is not only a learning object. It also becomes tracking data, sometimes HR data. From there, any approximation costs credibility.

If the LMS shows “Completed” when the learner never reached the expected level, or if the reported score doesn’t match what the learner actually experienced, trust erodes quickly. Then good luck defending the reporting.

The success rule must therefore be decided before delivery. Not at the end, in a rush, when you need to “report something.”

You need to explicitly decide:

- what triggers completion;

- what triggers pass or fail;

- which score, exactly, is interpreted;

- what happens in case of resume.

Last scene? Last checkpoint? Restart from the beginning? Each of these choices has direct consequences on data readability.
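To make those decisions concrete, here is a hedged sketch in Python of one possible rule, expressed as the values a SCORM 2004 package would report. The data model element names (`cmi.completion_status`, `cmi.success_status`, `cmi.score.scaled`, `cmi.exit`) come from the SCORM 2004 run-time data model; the function, the 0.7 pass mark, and the choice to suspend on interruption are assumptions. In SCORM 1.2, completion and success share a single `cmi.core.lesson_status` field, so the rule would need to be adapted.

```python
from typing import Dict


def scorm_2004_values(reached_end: bool, score: float, pass_mark: float = 0.7) -> Dict[str, str]:
    """Map one session outcome to SCORM 2004 data model elements.

    `score` and `pass_mark` are on a 0..1 scale; 0.7 is an arbitrary example.
    """
    values = {
        "cmi.completion_status": "completed" if reached_end else "incomplete",
        "cmi.score.scaled": f"{score:.2f}",
    }
    if reached_end:
        values["cmi.success_status"] = "passed" if score >= pass_mark else "failed"
        values["cmi.exit"] = "normal"
    else:
        # Interrupted session: leave success undecided and ask the LMS to keep
        # the attempt open so the learner can resume.
        values["cmi.success_status"] = "unknown"
        values["cmi.exit"] = "suspend"
    return values
```

Keeping completion and success as two separate outputs is what lets the reporting distinguish “the learner finished” from “the learner demonstrated something satisfactory.”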

Then you need to test in the target LMS at least four situations:

- a successful path;

- a failed path;

- a session interrupted then resumed;

- a session abandoned (abrupt close or browser exit).
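These four situations lend themselves to a small expected-versus-observed table. The Python sketch below assumes you note by hand what the target LMS displays after each test run; the case names and the expected values are illustrative and must be aligned with the rule actually chosen for the module.

```python
from typing import Dict, List

# Expected LMS values per test case. These expectations are assumptions to
# adapt to the rule actually chosen for the module.
EXPECTED: Dict[str, Dict[str, str]] = {
    "successful": {"completion": "completed", "success": "passed"},
    "failed": {"completion": "completed", "success": "failed"},
    "interrupted_then_resumed": {"completion": "completed", "success": "passed"},
    "abandoned": {"completion": "incomplete", "success": "unknown"},
}


def mismatches(observed: Dict[str, Dict[str, str]]) -> List[str]:
    """List every case whose observed LMS values differ from the expectation."""
    problems = []
    for case, expected in EXPECTED.items():
        actual = observed.get(case)
        if actual is None:
            problems.append(f"{case}: not tested")
        elif actual != expected:
            problems.append(f"{case}: expected {expected}, got {actual}")
    return problems
```

An empty list means the four cases behave as specified; anything else is a concrete item to raise with whoever configures the LMS.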

For the standard framework, the reference remains ADL documentation on SCORM: https://adlnet.gov.

Underestimating the weight of media

A module can look clean and still degrade, almost silently, because of its media.

That’s often where the most irritating annoyances hide: loading times that are too long, choppy video, out-of-sync audio, sound levels that vary from one scene to the next, a package that’s too heavy. Nothing spectacular, but more than enough to break immersion and increase drop-off.

The simplest thing is to standardize early.

A few useful guidelines:

- for video, aim for the right resolution, not the biggest;

- for audio, harmonize voice and background levels;

- for images, compress properly and avoid unnecessarily heavy formats.

A customer-relationship simulation doesn’t need raw “cinema” quality. It needs smoothness, readability, clean signals.

With VTS Editor, as with other authoring tools, following format recommendations also limits differences across machines. And it’s better to test on at least two audio setups: a standard headset and computer speakers, for example.

Security, pilot, communication: 3 levers to succeed in deploying a scripted module

Discovering network and security constraints after launch

Many incidents have nothing to do with the scenario. Or even with the LMS. They come from the corporate context, plain and simple.

External links blocked. Popups forbidden. API calls filtered. Remote resources inaccessible via VPN. Firewalls stricter depending on the site. A module can run perfectly at headquarters and break as soon as you leave that environment.

Conclusion: IT needs to be involved early. Not when tickets start coming in.

A clear list of dependencies already avoids a lot of back-and-forth:

- required domains;

- popups to allow, if needed;

- external calls;

- specific constraints related to the VPN.
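A list like this can double as a quick check to run from a learner’s workstation. The Python sketch below is only a starting point: the function names are illustrative, and it uses a direct TCP connection on port 443, which does not go through an HTTP proxy. In a proxied environment, the `probe` function would have to be replaced by something that matches how the browser actually reaches the network.

```python
import socket
from typing import Callable, Dict, Iterable


def can_connect(host: str, port: int = 443, timeout: float = 3.0) -> bool:
    """Try a TCP connection to the host; True when it succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_domains(domains: Iterable[str], probe: Callable[[str], bool] = can_connect) -> Dict[str, bool]:
    """Return reachability per required domain, using the given probe."""
    return {domain: probe(domain) for domain in domains}


# Usage (placeholder domains): check_domains(["lms.example.com", "cdn.example.com"])
```

Running the same check at headquarters, in a branch office, and over the VPN gives IT a concrete starting point for the discussion.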

Two points deserve almost systematic attention. First, popups are often blocked by default. Second, a VPN can severely degrade loading times. If your target audience works remotely, that case must be tested for real, not assumed.

And when an external resource is critical, it’s better to plan a backup: a version embedded in the module, or at least a clear fallback message explaining how to access the information another way.

Skipping a field pilot

A pilot isn’t there to look good on a project plan. It’s often the moment when the module finally meets reality.

And reality doesn’t read design intentions.

A good pilot doesn’t really try to “validate the solution overall.” It’s mainly used to check three simple things:

- job credibility;

- clarity of use;

- absence of major friction.

It doesn’t need to be endless, but it has to be representative. Mixing novice and experienced profiles helps a lot. Adding at least one constrained situation (old workstation, weak network, locked environment) is even recommended. That’s often where weaknesses show up.

To gather useful feedback, a few questions are enough:

- when did you hesitate about the expected action;

- what seemed not credible to you;

- did you encounter a technical problem;

- did the feedback help you improve.

And you have to look at the traces. Really. A spike in drop-off at a specific spot is often the first signal, before any verbal feedback.
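Spotting such a spike does not require heavy tooling. Here is a minimal sketch in Python, assuming you can export the last scene reached in each pilot session; the scene names and the shape of the export are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def drop_off_by_scene(last_scenes: Iterable[str], final_scene: str) -> List[Tuple[str, int]]:
    """Count where unfinished sessions stopped, most frequent scene first.

    `last_scenes` holds the last scene reached in each pilot session.
    Sessions that reached `final_scene` are not drop-offs and are ignored.
    """
    counts = Counter(scene for scene in last_scenes if scene != final_scene)
    return counts.most_common()


sessions = ["end", "scene_3", "scene_3", "scene_1", "end", "scene_3"]
print(drop_off_by_scene(sessions, final_scene="end"))
# [('scene_3', 3), ('scene_1', 1)]
```

In this made-up sample, three of the four unfinished sessions stop at the same scene, which is where the instruction, the interaction, or the media should be reviewed first.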

To support the role of testing in real conditions and learning by doing, you can also cite research work on the effectiveness of simulations in training, for example:

- Freeman et al. (2014), Active learning increases student performance in science, engineering, and mathematics (Proceedings of the National Academy of Sciences)

- Sitzmann (2011), A meta-analytic examination of the instructional effectiveness of computer-based simulation games (Personnel Psychology)

Doing a silent launch

Even an excellent module can go almost unnoticed if it’s launched without promotion, without framing, without relays.

It’s a point that’s often neglected, even though scripted e-learning lends itself well to being presented: you can position it as a practice space, a risk-free rehearsal, a place where you’re allowed to try, fail, and try again. Not as a thinly disguised assessment.

Still, you have to explain it.

At a minimum, the launch communication needs to make four elements visible:

- why this module exists;

- how much time it takes;

- when it must be completed;

- who to contact in case of an issue.

Two levers work particularly well. First, a managerial relay that presents the program as useful, not as one more constraint. Second, visible support that’s simple to access: a clearly identified person, a clear channel, not a maze. Without that, many learners drop out at the first obstacle, even a minor one.

Deployment checklist for scripted e-learning (simple and reusable)

The real challenge isn’t only to succeed at a launch. It’s also to make the next ones less fragile, faster, more reliable.

And for that, you don’t need an overengineered machine. A short, clear checklist often does the job.

Learning design

- validated observable objectives;

- defined success criteria;

- essential feedback in place.

UX

- instructions tested outside the project team;

- progressive aids planned;

- proper balance between passive time and active time.

Technical

- tests run in the real environment;

- optimized media;

- validated resume.

SCORM / LMS

- completion, success, and score verified in the target LMS;

- successful, failed, interrupted, and abandoned cases tested.

Security / IT

- validated dependencies: proxy, firewall, popups, domains;

- fallback solution planned for external resources.

Deployment

- field pilot conducted;

- communication ready;

- support ready too.

Resources and useful links to frame a scripted e-learning deployment

When you need to justify a technical or design decision, it’s better to rely on solid sources than internal habits.

- ADL, for SCORM standards and e-learning documentation: https://adlnet.gov

- Nielsen Norman Group, for UX fundamentals: https://www.nngroup.com

To go further on the Serious Factory side:

- Design software for gamified E-Learning modules made easy with AI

- Deploy your e‑learning courses with our LMS platform

- Interactive Role Play

- Client Cases – Discover their success with Virtual Training Suite

FAQ: scripted e-learning deployment

How do you distinguish a module that “passed” from a module that was only “completed” in the LMS?

You have to set a clear rule. “Completed” when the learner reaches an end of the path. “Passed” only if they reach the expected threshold, whether it’s an overall score or a level on certain competencies. Then, this logic must be tested in the LMS with at least one passing case and one failing case.

What are the minimum tests before publishing a SCORM simulation or serious game?

At minimum: launch, loading, critical interactions, audio/video, end of module, reporting of completion and score, then resume after interruption. And these checks must be done in the target LMS, not only locally.

Why does the module work on my machine but not for learners?

Because the problem is often tied to the real environment: proxy, firewall, VPN, mandated browser, less powerful workstation, audio playback policies, local restrictions. Until tests are done under target conditions, the gap remains invisible.

How do you prevent a learner from getting stuck in an interactive scene?

By working on UX first: explicit instructions, a clear mission, progressive hints in case of inactivity, and a fallback path when an unexpected state occurs. A large share of blocks comes from poorly stated implicit expectations.

How do you make the next deployments faster and more reliable?

By formalizing a lightweight method: shared checklist, short but representative field pilot, systematic LMS validation, then continuous improvement based on feedback and data.