We need to stop telling ourselves stories: slapping points, two badges, and a progress bar onto a module is no longer enough to create real learning momentum. Advanced e-learning gamification, the real kind, happens somewhere else: in the choices you give the learner, in the uncertainty you’re willing to create, and above all in the consequences that follow.

The principle is simple. You put the person in a credible situation. You push them to make a call. Then you show them what their decision produces, right away or a bit further down the line. You’re no longer laying playful decor over classic content; you’re training through action and building reflexes the learner can actually use.

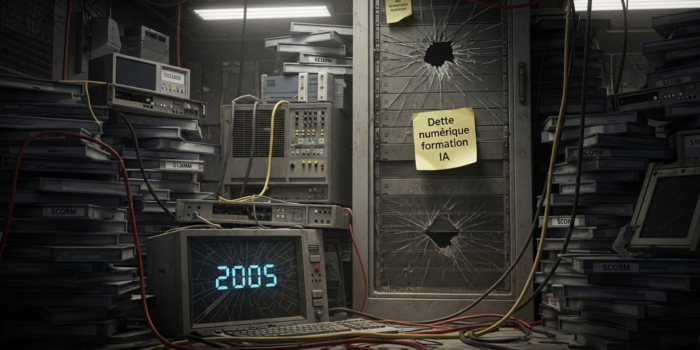

And there’s one point that’s often underestimated: this type of experience doesn’t necessarily require a massive development pipeline anymore. With visual scripting tools (VTS Editor by Serious Factory, for example), you can build interactive scenarios, track skills, then export to SCORM for an LMS—all without writing code. Put simply: it makes this type of pedagogy far more accessible.

Advanced e-learning gamification: what we really expect from training today

Putting a module online is no longer an end in itself. Training teams see it very quickly. What they’re asked for is something else: does it change anything, concretely? On the job. In decisions. In day-to-day behaviors.

Leadership wants visible effects, not just a nice completion rate. Managers are looking for observable changes: a steadier professional stance, fewer mistakes, more discernment. And learners, whether we like it or not, compare your learning paths to all the digital experiences they already have elsewhere. If it’s heavy, linear, sluggish, they feel it immediately.

A classic e-learning module remains useful for conveying a framework, recalling rules, structuring a topic. But as soon as you’re dealing with a finer skill (weighing trade-offs, observing, handling tension, holding your stance), its limits show up quickly.

Research says roughly the same thing. Sitzmann’s (2011) meta-analysis shows that simulations and serious games improve learning and retention, notably thanks to practice, feedback, and active engagement. Between “I know what I should do” and “I’m able to do it at the right moment,” there is often a wide gap, and that gap is closed through action. https://doi.org/10.1111/j.1744-6570.2011.01204.x

Advanced e-learning gamification: what are we talking about, exactly?

Advanced gamification in e-learning is not simply a more “fun” version of training. Framed that way, the topic misses its target.

The real challenge is to build a coherent instructional framework where the scenario, interactivity, and consequences move forward together. The goal is not to hold attention for a few more minutes. It’s about exercising judgment, stress-testing a response, making a behavior visible.

That’s where the gap becomes clear with more basic gamification. In its most common form, you add progress markers: score, badge, level, reward. It can help. But if the learner has no real trade-off to weigh, the effect remains superficial.

A more advanced approach runs on a different loop: context, decision, impact, feedback, new attempt. You don’t learn because you viewed content. You learn because you acted—and very often because you made a mistake.

A simple example, on compliance. A traditional course will say: “Don’t accept gifts.” Great. Clear message. Maybe remembered. But in a more lifelike scenario, the learner gets a message from an insistent supplier, sees that a salesperson wants to preserve the relationship, discovers a manager is CC’d, and realizes the timing is tight. The situation immediately becomes something else. Should you reply? Wait? Check the procedure? Ask for advice? Each option shifts the rest of the story. It’s that tension that truly trains behavior, not the final badge.
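To make the loop concrete, here is a minimal sketch of a branching scenario as plain data, loosely based on the supplier-gift situation. The scene names, choice keys, consequence texts, and the `play` helper are all hypothetical; a visual authoring tool would represent the same idea as connected blocks rather than code.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Choice:
    consequence: str            # what the learner sees right after deciding
    next_scene: Optional[str]   # None means the story ends here


@dataclass
class Scene:
    context: str
    choices: Dict[str, Choice] = field(default_factory=dict)


# Hypothetical content, invented for illustration only.
SCENARIO: Dict[str, Scene] = {
    "supplier_email": Scene(
        context="An insistent supplier offers a gift; a manager is CC'd; time is short.",
        choices={
            "reply_now": Choice("The supplier thanks you and brings up the next tender.", "tender_pressure"),
            "check_procedure": Choice("You find the gift policy and who to notify.", "declare_gift"),
        },
    ),
    "tender_pressure": Scene(
        context="The supplier now hints at a favour in return.",
        choices={"ask_advice": Choice("Compliance helps you return the gift.", None)},
    ),
    "declare_gift": Scene(
        context="You know the rule; the supplier is still waiting for an answer.",
        choices={"decline_politely": Choice("The relationship holds and the file is clean.", None)},
    ),
}


def play(scenario, start, decisions):
    """Follow a list of decisions and return (scene, decision, consequence) steps."""
    trace = []
    scene_id = start
    for decision in decisions:
        choice = scenario[scene_id].choices[decision]
        trace.append((scene_id, decision, choice.consequence))
        if choice.next_scene is None:
            break
        scene_id = choice.next_scene
    return trace
```

Calling `play(SCENARIO, "supplier_email", ["reply_now", "ask_advice"])` walks a different branch than starting with `"check_procedure"`: the same opening context leads to a different second scene, which is the whole point of the exercise.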

To go deeper into what we mean by experiential learning (learning by doing), a solid foundation remains Kolb’s learning model, which explains why action and reflection on action improve skill acquisition. https://psycnet.apa.org/record/1984-05715-000

Advanced e-learning gamification: useful interactivity, not a gimmick

An interaction only has value if it serves a clear instructional intent. Otherwise, it clutters. It can give an impression of richness, sometimes, but it scatters more than it helps.

The question to ask is simple: what is this interaction for? To decide? To observe? To memorize? To test a posture? If the answer remains fuzzy, there’s a strong chance the element is one too many.

Advanced e-learning gamification: when a scenario actually becomes useful

A good scenario almost never relies on the caricatured opposition between one glowing correct answer and one grotesque wrong answer. In real life, bad choices often have immediate upsides. They reassure. They avoid conflict. They save time—at least on the surface. That’s precisely why they trap you.

Take a corrective performance conversation in management. We can imagine three stances:

- use an understanding tone but stay too vague, which calms things down without setting a framework;

- go head-on into the clash, at the risk of creating immediate resistance;

- speak clearly, factually, firmly, while still leaving space for the other person.

What you’re working on here isn’t a “school” answer. It’s a way to hold the relationship without losing sight of the goal.

Feedback: what really helps you improve

A bare “correct” or “incorrect” is rarely very useful. It closes reflection more than it opens it.

Useful feedback answers three questions instead:

- what did you choose?

- what does that choice produce here?

- what would need adjusting next time?

In a customer relationship scenario, this can come through a visible reaction from the character: the tone hardens, patience drops, or conversely an opening appears. Then a brief comment sheds light on the mechanism. You can add a micro-remediation if the mistake repeats. The idea isn’t just to correct—it’s to make the effect of the choice felt.

Replayability: practice rather than consume

A module you go through once gets consumed. A module you can replay with a few variations gets practiced.

No need for a complex architecture. Small variations are often enough:

- several plausible branches based on key decisions;

- a bit of randomness in the context or an interlocutor profile;

- elements to explore before choosing.

In safety, for example, asking the learner what they inspect in a workshop (PPE, barriers, signage, storage areas) mobilizes observation. You’re no longer only asking them to remember; you’re asking them to act.
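As an illustration of how little it takes to make a module replayable, the sketch below draws an interlocutor profile and a context detail at random. The profile and context lists and the `build_variation` function are invented for the example; only the idea of light randomness comes from the list above.

```python
import random

# Hypothetical variation pools for a single scene.
PROFILES = ["defensive", "apologetic", "evasive"]
CONTEXTS = ["end of a long shift", "just before a client visit", "first incident this quarter"]


def build_variation(seed=None):
    """Pick one profile and one context; a fixed seed reproduces the same draw."""
    rng = random.Random(seed)
    return {"profile": rng.choice(PROFILES), "context": rng.choice(CONTEXTS)}
```

Passing a seed is a design convenience: testers can replay exactly the variation a learner saw, while learners who start a new attempt without a seed get a fresh combination.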

Measuring impact, without turning training into a courtroom

The question comes up constantly, and it’s legitimate: how do you prove effectiveness without tipping the experience into a permanent control logic?

The answer isn’t to measure less. It’s to measure better.

A useful assessment doesn’t only serve to report a final score. It should highlight the learner’s blind spots, help training leaders spot remediation needs, and enable HR to talk in observable behaviors—not just grades.

Move beyond a single score

An overall score is simple, but it simplifies too much.

Scoring by competency offers a more usable reading. In a module on a managerial interview, you can track separately:

- communication;

- listening;

- managerial posture;

- emotional management.

A choice can improve listening while weakening posture, for example if the manager leaves too much space without ever reframing. That kind of tension looks more like real life.
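One way to picture competency-based scoring is a table of choices, each carrying its own effect on one or more axes. The sketch below is an assumption-laden example: the choice names and point values are invented, and only the four competencies come from the list above.

```python
COMPETENCIES = ("communication", "listening", "managerial_posture", "emotional_management")

# Hypothetical effects: each decision moves one or more competencies up or down.
CHOICE_EFFECTS = {
    "state_objective_clearly": {"communication": 2, "managerial_posture": 1},
    "let_employee_talk_without_reframing": {"listening": 2, "managerial_posture": -1},
    "raise_voice": {"emotional_management": -2, "managerial_posture": -1},
}


def score_by_competency(decisions):
    """Sum the effects of each decision into one total per competency."""
    totals = {name: 0 for name in COMPETENCIES}
    for decision in decisions:
        for name, delta in CHOICE_EFFECTS[decision].items():
            totals[name] += delta
    return totals
```

With these made-up values, a learner who states the objective and then lets the employee talk without reframing ends with listening at 2 and managerial posture back at 0, which is exactly the kind of tension a single overall score would hide.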

Badges and points: provided they actually mean something

The badge, in itself, isn’t the problem. The problem is the emptiness it can cover up.

If it materializes a clear behavior, it becomes useful:

- “Active Listening” if the learner paraphrases before proposing a solution;

- “Compliance Rigor” if they check the procedure before escalating at the right level;

- “On-the-Job Safety” if they spot several critical risks without a major blind spot.

On the other hand, a simple completion badge rarely convinces. Learners aren’t fooled: you’re congratulating them for getting through the module, not for developing a skill.
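The three badge examples can be read as rules over what the learner actually did. The following sketch assumes a hypothetical log of action names and sets of risks; it also interprets “without a major blind spot” as “every critical risk was spotted,” which is one reading among several.

```python
def earned_badges(actions, risks_spotted=frozenset(), critical_risks=frozenset()):
    """Return badges justified by observed behavior (all names are hypothetical)."""

    def happens_before(first, second):
        # True only if both actions occurred and `first` came earlier.
        return (
            first in actions
            and second in actions
            and actions.index(first) < actions.index(second)
        )

    badges = []
    if happens_before("paraphrase", "propose_solution"):
        badges.append("Active Listening")
    if happens_before("check_procedure", "escalate"):
        badges.append("Compliance Rigor")
    # Assumption: the safety badge requires every critical risk to be spotted.
    if critical_risks and set(critical_risks) <= set(risks_spotted):
        badges.append("On-the-Job Safety")
    return badges
```

Note that nothing here rewards reaching the last screen: a learner who proposes a solution before paraphrasing simply does not get “Active Listening.”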

Designing advanced e-learning gamification without coding: a simple method

The real challenge isn’t multiplying interactions. The hardest part is developing a solid method you can reuse from one module to the next without starting over from scratch.

Visual block-based authoring tools, like VTS Editor by Serious Factory, make this work more approachable: you connect scenes, define branches, track simple variables, then deliver it all via a SCORM export.

Start from the expected on-the-job behavior

The right starting question is very concrete: what needs to change starting Monday morning?

From there, you draft a few observable criteria. Not fifteen. Three to five is enough.

For a corrective performance conversation:

- clearly announce the objective;

- rely on facts rather than judgments;

- leave room for a response and paraphrase;

- end with a clear commitment.

These criteria frame the writing, scoring, and debrief. They prevent the project from turning into a patchwork.

Break it into learning scenes, not screens

A scene isn’t just a backdrop or a series of screens. It’s an instructional unit.

The simplest approach is to define one intent per scene: “spot a risk,” “surface an objection,” “ask an open question,” “reframe without attacking.” Only then do you choose the interactive mechanic that fits.

A few formats work particularly well:

- choice-based dialogue for interpersonal skills;

- clickable hotspots for observation or inspection;

- very short quizzes, integrated into the scenario logic, to check a knowledge point;

- drag-and-drop or matching to organize a process.

The classic trap: varying mechanics just to create an impression of variety. You vary when the competency changes, not to “fill” a screen.

Add a light adaptive logic

This is often where gamification becomes interesting, because the module starts to react, even modestly, to the learner’s actions.

This logic can stay simple:

- indicators (flags) to remember an action, for example “consulted the procedure”;

- counters to track attempts and trigger progressive help;

- score thresholds to unlock a more advanced scene;

- a countdown when urgency is part of the situation.

In other words, no need to build a complicated contraption. A few well-thought-out states are often enough to give the impression that the module responds to what the user does.
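The four mechanisms listed above fit in a handful of lines. This is a minimal sketch, not how any particular authoring tool implements them: the state fields, the threshold values, and the scene names returned by `next_step` are all assumptions.

```python
from dataclasses import dataclass, field

UNLOCK_THRESHOLD = 6      # hypothetical score needed for the advanced scene
HELP_AFTER_ATTEMPTS = 2   # hypothetical number of tries before offering help


@dataclass
class LearnerState:
    flags: set = field(default_factory=set)  # e.g. {"consulted_procedure"}
    attempts: int = 0                        # counter of tries on the scene
    score: int = 0
    seconds_left: int = 90                   # countdown for urgent situations


def next_step(state):
    """Decide what the module shows next from a few simple states."""
    if state.seconds_left <= 0:
        return "timeout_debrief"
    if state.score >= UNLOCK_THRESHOLD:
        return "advanced_scene"
    if state.attempts >= HELP_AFTER_ATTEMPTS and "consulted_procedure" not in state.flags:
        return "hint_procedure"
    return "retry_scene"
```

The order of the checks is itself a design decision: here the countdown takes priority over everything else, and the hint only appears for learners who have not yet opened the procedure.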

Use media as support, not decoration

Realism doesn’t necessarily depend on costly filming. It often comes down to accurate details: an expression that closes off after an awkward move, background noise from a workshop, an audible alert, a very short video showing a gesture.

If the media helps you understand, choose, retain, it has a function. Otherwise, it weighs things down.

Prototype early, fix fast, then standardize

Having a prototype tested by someone outside the project is often very effective. Flaws surface quickly:

- an instruction the team considers crystal clear but that is fuzzy for the user;

- two options perceived as nearly identical;

- feedback that’s too long;

- a moment where people click randomly because the stakes aren’t clear.

Then, to produce at larger scale, you can rely on reusable templates: briefing, decision, consequence, remediation, debrief. In a tool like VTS Editor, this can correspond to groups of blocks you reuse from module to module, and the time savings become tangible.

Example of an interactive scenario: practicing a corrective performance conversation

A frequent question: concretely, what does it look like without a development team?

Let’s imagine a 15-to-20-minute simulation. The learner plays the role of a manager. Across from them, an employee arrives defensive, downplays their lateness, justifies themselves, sometimes shifts the blame to the context. The manager has to reframe. Without humiliating. Without sugarcoating. Without letting it slide.

The learning path can be organized like this:

- a briefing sets the context and objective;

- three dialogue sequences structure the conversation: opening, handling an emotional objection, conclusion;

- after each important choice, the interlocutor’s reaction becomes visible: shutdown, tension, calming, commitment;

- if certain mistakes repeat, a short remediation appears: resource, reminder, mini-debrief;

- the end of the module offers a competency-based readout, with targeted recommendations.

The assessment can track four axes:

- communication;

- listening;

- managerial posture;

- emotional management.

There’s no need to grade every sentence. It’s better to focus on the decisions that structure the exchange. That way you avoid the “disguised exam” effect, and the final summary gains readability.

With VTS Editor, this type of module can be built by an instructional designer based on:

- scenes organized in a visual graph;

- dialogue blocks, choices, and quizzes;

- building blocks to manage flags, counters, scores, and checks;

- media and animations useful to immersion;

- then a SCORM export for integration into the LMS.

To see what this looks like in real conditions, a relevant customer case (immersive training and “risk hunt”) is the Manpower Academy customer case from Serious Factory.

Enterprise rollout: what you can actually track

Another question comes up often: is it compatible with our LMS constraints and our IT environment?

With a SCORM export, you retain classic tracking:

- progress;

- completion;

- pass or fail;

- overall score.

But the value doesn’t stop there. If the scoring was designed by competency in the authoring tool, you keep a finer reading, useful for remediation, coaching, or targeting complementary learning paths.
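For readers who want to see what the four classic tracking items look like as data, here is a rough sketch using SCORM 2004 data model element names. The source does not say which SCORM version is exported, so SCORM 2004 is an assumption, as are the `to_scorm_2004` function and its `pass_mark` parameter. In a real package these values are sent to the LMS through its JavaScript run-time API; the Python below only illustrates the mapping.

```python
def to_scorm_2004(progress, raw_score, max_score, pass_mark=0.6):
    """Map module results to SCORM 2004 data model elements (values as strings).

    progress is a fraction between 0 and 1; pass_mark is a hypothetical
    threshold on the scaled score.
    """
    scaled = raw_score / max_score
    return {
        "cmi.progress_measure": f"{progress:.2f}",
        "cmi.completion_status": "completed" if progress >= 1.0 else "incomplete",
        "cmi.success_status": "passed" if scaled >= pass_mark else "failed",
        "cmi.score.raw": str(raw_score),
        "cmi.score.max": str(max_score),
        "cmi.score.scaled": f"{scaled:.2f}",
    }
```

Completion and success are kept separate on purpose: a learner can finish the whole scenario and still fall below the pass mark, and the LMS report should be able to show both facts.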

Useful references:

- SCORM, via ADL: https://adlnet.gov/projects/scorm/

- Wouters et al. (2013), a meta-analysis on serious games and learning that is often cited in academic work: https://doi.org/10.1037/a0031311

Frequently asked questions about advanced e-learning gamification

What’s the difference between a serious game and a gamified e-learning module?

A gamified module takes a training structure and adds game-inspired mechanics: choices, scoring, feedback, progression. A serious game, on the other hand, is designed from the start as a game experience serving an instructional objective. Generally, it goes further on immersion, narrative, and simulation logic. In practice, the boundary mostly depends on the level of simulation, non-linearity, and freedom of exploration.

Can this type of solution be designed without a developer?

Yes, provided you use an authoring tool that enables visual scripting. Tools like VTS Editor let you assemble scenes, configure interactions, and add simple logic (flags, scores, counters) without programming.

Which topics work best?

The most suitable topics are often those where mistakes are costly, or where posture changes everything:

- management and HR;

- customer relationship;

- safety and prevention;

- compliance;

- quality and adherence to procedures.

As soon as you need to observe, weigh trade-offs, react, or hold a line in a somewhat ambiguous situation, the approach is highly relevant.

How do you avoid the gimmick effect with points and badges?

By systematically tying rewards to observable behaviors. Every point must correspond to a decision that matters. Every badge must signal something clear. Fewer rewards, more meaning.

How do you quickly get internal stakeholders on board?

What’s most convincing is usually not a big speech. It’s a prototype. Pick a high-stakes situation, isolate three key decisions, track two competencies, then have the committee test the scenario. Ten minutes of a well-designed experience often wins more buy-in than a thirty-slide deck.