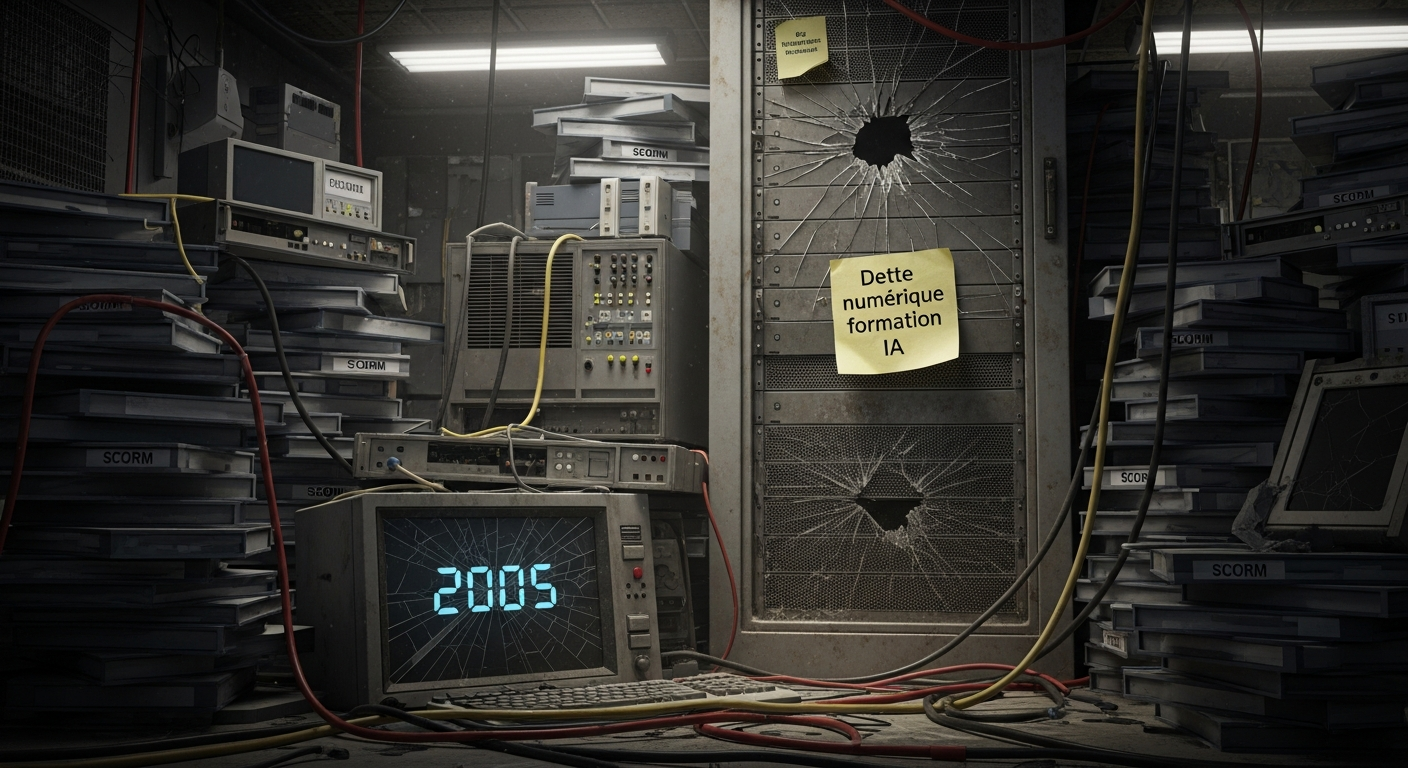

A module can still be there, neatly stored in the LMS, accessible in two clicks, seemingly compliant, and yet no longer help many people. That’s often the sign of AI training digital debt that has quietly settled in and is steadily wearing down the value of your content.

Over the years, many e-learning catalogs accumulate a somewhat sneaky kind of debt. Nothing spectacular at first: content no one touches anymore, source files no one can find, an old authoring tool no one dares open again, an agency you have to call back to change three words, formats that still stand up but that everyone avoids handling. On paper, everything works. In practice, the value slips. It erodes. And sometimes it drops off all at once, as soon as a business tool changes, a rule shifts, or an internal process is revised.

The classic reflex is to say: we need to rebuild everything. In reality, not necessarily. Often, it’s even a bad spend. What works best is rather to take what already exists, sort through it, remove what smells like mothballs, update what still holds up, and transform the rest. AI helps enormously with that: rewriting, adaptation, translation, variations, generating dialogues—yes, it saves a ton of time. But it’s only a lever. If the goal is to truly extend the lifespan of a training course, you have to go further: leave overly descriptive content behind and move toward formats where you choose, where you hesitate a bit, where you sometimes get it wrong. In short, formats that train rather than simply explain.

That is precisely where authoring tools designed for scenario-based training become interesting. With a solution like VTS Editor from Serious Factory, a static module can be transformed into decision-based training without a heavy development effort. And that’s not a detail: updates become simpler, maintenance costs less, and training finally looks more like the field than like a digitized document.

Digital debt in training: when it settles in quietly

Digital debt in training isn’t just a technical story. If only. It would be easier to deal with.

In reality, it mostly comes from small decisions accumulated over time: a tool chosen to move fast, content delivered without thinking about its maintenance, a rigid architecture, a vendor who becomes indispensable, a module designed as a finished object rather than as a living resource. Taken separately, nothing dramatic. Together, they end up blocking everyone.

From afar, though, nothing really raises an alarm.

The modules are there.

They open.

They run.

And then one day you need to correct an instruction, update a screenshot, change terminology, adapt a sales scenario, integrate a new compliance rule, and everything gets complicated.

What should have taken thirty minutes turns into a mini-project. You have to find the files. Figure out which tool it was produced with. Check whether anyone still knows how to use it. Recontact a vendor. Test across multiple browsers. Revalidate in the LMS. Get sign-off. At that point, the debt is no longer a nice abstract concept: it has already started biting into time, budget, and agility.

You often find the same symptoms in training, HR, or L&D teams:

- the source files are gone, or no one really knows how to use them anymore;

- an agency or a very specific profile remains essential for the smallest change;

- rendering works poorly on mobile, or varies by browser;

- learners click through fast, finish fast, and retain little;

- the situations shown no longer have much to do with real day-to-day work.

At the end of the day, the cause is fairly mundane. Many modules were thought of as deliverables to wrap up and then mentally archive. But digital training is nothing like a PDF you drop into a folder. It’s a living asset. And a living asset, if you immobilize it, ages—badly, in general.

Obsolete skills: the real danger is training correctly for situations that no longer exist

The issue isn’t only instructional. It’s very operational. Very business-driven.

A skill becomes obsolete quickly when the practice no longer matches real working conditions. You can have content that is absolutely correct, clean, well-written, validated at the time, and still useless when it’s time to act. It still lays out rules, sure. But it no longer prepares people to decide correctly, at the right time, under constraints.

And in real life, it’s not the slides that come back to mind. It’s reflexes. Reference points. Trade-offs. What you do when things don’t go as planned.

In some sectors, this gap between training and reality gets expensive, very fast: safety, health, industry, sales, customer relations, cybersecurity, quality. A few months can be enough. An escalation procedure has changed. The module still shows the old one. An instruction has been refined. The training material still presents an overly simplified version. From there, you’re no longer simply behind. You’re in a risk zone.

The context, moreover, isn’t getting any simpler. The World Economic Forum’s Future of Jobs Report 2023 reminds us: expected skills are evolving rapidly, and upskilling and reskilling needs are becoming increasingly strategic. In short: when jobs shift fast, training systems must be able to keep up without excessive inertia.

Source:

- World Economic Forum, Future of Jobs Report 2023: https://www.weforum.org/publications/the-future-of-jobs-report-2023/

For further reading on the research side, there is also landmark work on learning through action and feedback, which helps explain why scenarios better support transfer to the workplace:

- Salas et al., “The Science of Training and Development in Organizations: What Matters in Practice” (Psychological Science in the Public Interest): https://journals.sagepub.com/doi/10.1177/1529100619826106

AI training digital debt: why so many SCORM modules age the wrong way

SCORM itself isn’t the real culprit. But it has to be said: the way it’s often used doesn’t help.

In many companies, you still find the same model. Pages that follow one another. Linear content. Then a final quiz. It’s reassuring, easy to distribute, everyone knows it. But that kind of module mainly conveys information. It trains very little.

As soon as you need to update content often, or prepare someone to decide in an imperfect situation, the page-turner quickly shows its limits.

Let’s take something very basic: a customer complaint. You can list the steps to follow, detail best practices, add two or three watch points. Great. The learner can even remember them. The problem is that on the day a customer gets irritated, or an internal guideline contradicts the situation, or the CRM tool lags or stops responding, you have to decide. And then, the skill is no longer in reciting. It’s in choosing.

That’s the whole difference between a declarative module and a scenario. The first describes. The second exercises judgment.

Good news: modernizing doesn’t necessarily mean tearing everything down. In many cases, it’s enough to identify decisive moments, the real turning points, then convert them into interactions with consequences, feedback, and branching. It’s less spectacular than a total overhaul, but often smarter.

Training obsolescence: hidden costs beyond the quote

You first think about the maintenance budget. That’s logical. But the heaviest costs are often elsewhere, and sometimes invisible until you tackle the issue head-on.

Business know-how: when critical knowledge gets diluted

Useful knowledge, the real thing, never fits entirely into a procedure. A senior colleague doesn’t just know what to do. They know what to watch. They know when to doubt. They sense that a detail deserves verification before an incident escalates. That kind of finesse doesn’t translate well into a PDF or a frozen slide deck.

A simulation, on the other hand, can make people practice it.

In industrial maintenance, for example, an experienced technician doesn’t always follow the same analysis order. They adapt their diagnosis to clues, revisit priorities, interpret a noise, a smell, a weak symptom. This evolving reasoning is hard to convey with a classic linear module. With a scenario where you choose, get it wrong, adjust, understand why, it immediately becomes more credible.

Engagement: when it drops, the rest follows

Learners don’t judge your modules only by comparing them to other modules. They compare them to everything they use daily: mobile apps, video platforms, smooth interfaces, interactive experiences. So when training looks like a somewhat dull administrative formality, the signal is crystal clear: we’re here to check the box, not to learn something useful.

And that feeling leaves very concrete traces. You often see it in populations already under pressure—sales teams, managers, support, IT, production:

- speed-run completions;

- low memorization;

- comments like “too long,” “not concrete,” “not up to date,” “seen it already.”

In other words: training is consumed, not really absorbed.

Compliance: defensible—until the day it isn’t

During an audit, people don’t only ask whether a module was delivered. They also look at its consistency, its date, how well it matches current practices, its evaluation method, and its traceability. Dated content may very well have been completed by everyone and still create a problem: contradictory instructions, obsolete screenshots, outdated rules, ambiguous interpretations.

That’s the kind of gray area that becomes painful to justify, especially when the gaps between content and business reality start becoming visible.

So for a training manager, the challenge isn’t to produce more. It’s to be able to update quickly, cleanly, without excessive friction, and with clear traceability.

AI and updates: fine-grained modernization rather than rebuilding everything

AI is truly useful when it fits into a method. Not when you hand it instructional design the way you delegate a chore.

In many cases, the best option is to do a real facelift on what already exists. Keep what holds up. Remove what no longer makes sense. Strengthen weak points. Above all, add what’s most often missing: interaction, decisions to make, and useful feedback on those decisions.

To start, you don’t need a sprawling program. A simple approach, on a few critical modules, can already move the needle:

- spot the high-stakes modules: risk, volume, onboarding, compliance, business stakes;

- map what has aged: procedures, interfaces, wording, screenshots, rules;

- identify the moments when the learner truly has to choose, not just remember;

- use AI to speed up everything that can be sped up: rephrasing, scripts, variants, dialogues, translations, adaptations;

- rebuild the format in an authoring tool able to handle scenarios, then observe what it changes: engagement, scores, frequent errors, completion time.
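The first steps of this approach can be sketched as a small triage script. This is only an illustration: the `Module` fields, the `pilot_candidates` function, and the sample catalog are all hypothetical, and the ranking rule (high-stakes modules first, most outdated at the top) is one assumption among many possible ones.

```python
from dataclasses import dataclass


@dataclass
class Module:
    title: str
    high_stakes: bool     # risk, volume, onboarding, compliance, business stakes
    outdated_items: int   # aged procedures, screenshots, wording, rules found in review
    decision_points: int  # moments where the learner truly has to choose


def pilot_candidates(modules: list[Module], limit: int = 3) -> list[Module]:
    """Keep high-stakes modules that have aged, most outdated first."""
    shortlist = [m for m in modules if m.high_stakes and m.outdated_items > 0]
    shortlist.sort(key=lambda m: (m.outdated_items, m.decision_points), reverse=True)
    return shortlist[:limit]


# Hypothetical catalog, for illustration only
catalog = [
    Module("Complaint handling", True, 6, 4),
    Module("Office tour", False, 9, 0),
    Module("Incident escalation", True, 2, 5),
    Module("Data protection basics", True, 0, 1),
]

for module in pilot_candidates(catalog):
    print(module.title)
```

Whatever the exact rule, the point is to start from a short, explicit shortlist rather than from the whole catalog.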

The logic shift is here. You no longer work through big, spaced-out, painful overhauls, but through iterations. The field evolves, training follows faster. That’s often, at the end of the day, what gives a catalog its lifespan back.

Authoring tool and digital debt in training: why VTS Editor simplifies maintenance

VTS Editor’s value isn’t only about styling or visual output. The strong point is more structural: the very way the experience is built.

The principle is based on blocks linked together: information, interaction, media, variables, scores, conditions, branching. This logic makes it possible to start from content that already exists—procedure, guide, presentation, internal documentation, video—then convert it into a practice pathway.

Take a training course on incident handling. You can set the context with a first message block, establish a situation, let a counterpart speak, offer a choice, trigger immediate feedback, measure a skill, then route what comes next based on the decision made. Flags, scores, state checks: all of that makes it possible to build something living without getting into heavy development.

The key point is maintenance. If a procedure evolves, you don’t need to rebuild everything. You adjust a scene, a dialogue, a business rule, a piece of feedback. The overall mechanics stay in place. The structure doesn’t collapse at the first change. And that, frankly, makes life much easier for teams.
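To make the block logic concrete, here is a minimal sketch of a branching scenario as linked blocks with choices, feedback, and a score. It is not VTS Editor’s format or API (the tool is visual and requires no programming); the `Choice` and `Block` classes, the `play` function, and the incident scenario are hypothetical and serve only to illustrate the structure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Choice:
    label: str
    feedback: str
    points: int
    next_block: Optional[str]  # None ends the scenario


@dataclass
class Block:
    message: str
    choices: list[Choice]


# Hypothetical incident-handling scenario: two decision points
scenario = {
    "start": Block(
        "A customer reports that the service has been down for an hour.",
        [
            Choice("Acknowledge and open a ticket", "The incident is now traceable.", 10, "escalate"),
            Choice("Ask them to call back later", "The incident stays untracked.", 0, "escalate"),
        ],
    ),
    "escalate": Block(
        "The outage is confirmed. What next?",
        [
            Choice("Escalate to the on-call team", "Correct escalation path.", 10, None),
            Choice("Wait for it to resolve itself", "The delay makes things worse.", 0, None),
        ],
    ),
}


def play(blocks: dict[str, Block], decisions: list[int], start: str = "start") -> tuple[int, list[str]]:
    """Follow the learner's decisions through the graph; return score and feedback trail."""
    score, trail, current = 0, [], start
    for pick in decisions:
        if current is None:
            break
        choice = blocks[current].choices[pick]
        score += choice.points
        trail.append(choice.feedback)
        current = choice.next_block
    return score, trail


score, trail = play(scenario, [0, 1])
print(score)  # 10
```

In a structure like this, a changed escalation procedure means editing one `Choice` in the `"escalate"` block; the rest of the graph is untouched.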

Another advantage, and not the least: this maintenance can be taken back in-house. No need to constantly depend on scarce technical resources or external graphic profiles for every small correction.

Interactive video: useful when it forces you to choose

Video is everywhere in training. But in many cases, it’s used in a very “well-behaved” way. You watch, you skip ahead, you move on.

The real potential appears when the video stops just before the consequence and asks the learner: “And now, what do you do?”

In safety topics, for example, this mechanic works remarkably well:

- a scene presents a risky situation;

- the video pauses at the decisive moment;

- the learner chooses their action;

- the module shows the consequences and explains the expected rule.

In VTS Editor, this logic is built simply by combining video, interaction, then instructional feedback, possibly with a score or progress state. You’re no longer in passive viewing. You move to consequence-based learning. And it looks much more like real life.

Multilingual consistency: a good maturity test for AI training digital debt

In multisite or international organizations, digital debt often shows up in a place people rarely inspect: gaps between language versions.

The French version has been updated. The English one is still waiting. The Spanish one still uses an old screenshot. The German one hasn’t incorporated the latest regulatory nuances. And over the months, the versions drift away from one another.

AI clearly reduces translation and rephrasing costs. It’s a real leap forward. But if the project structure is unstable, problems come back quickly: duplicated content, staggered evolutions, local versions each going their own way.

A tool like VTS Editor makes this type of management easier by supporting multilingual projects, as well as text-to-speech voiceover. For training and HR teams, the real benefit isn’t only going faster. It’s keeping a common foundation while leaving room for local adaptations, without losing control of the whole.
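The drift between language versions is easy to check once each version carries a revision number. The sketch below assumes exactly that: the `revisions` mapping and the `lagging_versions` function are hypothetical, and a real catalog would need to track revisions in whatever way its tooling allows.

```python
def lagging_versions(revisions: dict[str, int], reference: str) -> dict[str, int]:
    """Return each language that trails the reference, with how many revisions it is behind."""
    target = revisions[reference]
    return {
        lang: target - rev
        for lang, rev in revisions.items()
        if lang != reference and rev < target
    }


# Hypothetical revision numbers for the language versions of one module
revisions = {"fr": 7, "en": 6, "es": 4, "de": 7}

print(lagging_versions(revisions, reference="fr"))  # {'en': 1, 'es': 3}
```

A report like this does not fix the drift, but it turns a vague suspicion into a list of versions to update.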

A simple diagnosis to see whether your catalog has aged in silence

No need to immediately launch a sprawling audit to know there’s a problem brewing. A few questions are enough to surface signals.

Answer yes or no:

- are your most viewed modules more than three years old?

- does a simple update require a vendor or a tool you don’t control?

- do your learners find the content long, dated, or too theoretical?

- is there little—or no—scenario-based practice in your pathways?

- have some instructions changed so much that you’d hesitate to defend the content in an audit?

If you get four or five yes answers, there’s not much suspense left: it’s better to launch a modernization pilot on a few critical modules than to wait for a miraculous big overhaul.
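The same checklist can be written as a few lines of code, for example to run it across several catalogs. The `needs_pilot` function name is hypothetical; the threshold of four yes answers comes from the rule above.

```python
QUESTIONS = [
    "Are your most viewed modules more than three years old?",
    "Does a simple update require a vendor or a tool you don't control?",
    "Do your learners find the content long, dated, or too theoretical?",
    "Is there little or no scenario-based practice in your pathways?",
    "Would you hesitate to defend the content in an audit?",
]


def needs_pilot(answers: list[bool], threshold: int = 4) -> bool:
    """True when the number of yes answers reaches the threshold."""
    if len(answers) != len(QUESTIONS):
        raise ValueError("one yes/no answer per question is expected")
    return sum(answers) >= threshold


print(needs_pilot([True, True, True, True, False]))  # True
```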

Frequently asked questions about AI training digital debt

What exactly do we mean by digital debt in training?

It’s the accumulation of content that becomes painful to evolve: lost source files, frozen formats, dependence on a vendor, stiff architecture, aging tools. Little by little, these modules lose their relevance, credibility, and operational value. The effects are well-known: hidden costs, slow updates, a growing gap with what happens in the field.

How do you spot an obsolete module if it still works in the LMS?

The mere fact that it’s accessible doesn’t prove much. What matters is how well it matches business reality. If the examples are dated, screenshots outdated, procedures modified, or the learning experience too linear, obsolescence is already there. Learner feedback often provides very good clues, by the way: boredom, speeding through, low retention, a sense of disconnect.

Can AI, on its own, modernize a training course?

No. It saves precious time on rephrasing, scripts, variants, translations, or certain adaptations. However, a training course’s durability depends mostly on its structure, its level of interactivity, and how easy it is to maintain. If the module stays flat and frozen, you accelerate text production, not really instructional impact.

How do you modernize without rebuilding the whole module?

By doing a targeted facelift. You keep what’s still valid, replace what’s obsolete, then identify the important decisions to train. Next, you turn those decision points into interactions with choices, consequences, and feedback. This approach is often faster, more credible, and closer to real work than a total overhaul.

Why choose VTS Editor over a classic page-turner module?

Because it makes it possible to create interactive, realistic, gamified scenarios via a visual block-based interface, without programming. For a training team, that means more autonomy, less external dependence, and much shorter maintenance cycles. Put differently: a very concrete reduction in digital debt.

Turning your catalog into a living asset

Digital debt isn’t inevitable. Skill obsolescence isn’t either.

The real challenge, for a training, HR, or instructional team, isn’t to keep stacking more modules at an ever faster pace. The challenge is to build a durable capability: update quickly, train decision-making, measure what evolves, stay solid in an audit, bring the catalog to life instead of suffering through it.

Yes, AI speeds things up. Obviously. But what makes training durable isn’t only writing speed. It’s a design that’s built to evolve, be maintained, be transformed without pain. That’s exactly what an authoring tool like VTS Editor from Serious Factory enables: creating interactive experiences, scenarios, and serious games that can be exported—especially as SCORM—without requiring advanced technical or graphic skills.

And, very often, that’s how you finally get out of the exhausting cycle of massive reworks: not by starting from scratch every three years, but by making what already exists truly transformable.

To discover concrete examples and field feedback:

- Client Cases – Discover their success with Virtual Training Suite

- Thales: a large-scale cybersecurity serious game

- Success Stories: what training teams take away after rollout

- VTS Perform: deployment, tracking, and skills assessment

To extend the reflection:

- Serious games in training: uses, examples, and ROI

- Scenario-based e-learning: designing scenarios that change behaviors

- E-learning authoring tools: selection criteria for training managers

- SCORM and LMS: how to ensure training tracking and traceability
